Large language models (LLMs) have become extremely potent tools in the natural language processing (NLP) era, able to comprehend and produce prose of human caliber. However, it can be costly and time-consuming to train these large models computationally. In order to overcome these difficulties, Data-Parallel Optimization (DPO) has come to light as a potentially effective method that can train LLMs more quickly and effectively.

Hugging Face's 7.1B-parameter LLM Zephyr 7B beta uses DPO to produce remarkable results in dialogue processing tasks. In this blog post, we delve into the impact of DPO on Zephyr 7B beta, examining its effectiveness in enhancing training efficiency and final model performance.

Zephyr 7B - Model on HF:

Zephyr 7B is a 7.1B-parameter language model (LLM) developed by Hugging Face that is fine-tuned from the mistralai/Mistral-7B-v0.1 model. It is trained on a massive dataset of dialogue interactions and is designed to be a helpful assistant that can engage in natural and engaging conversations. Zephyr 7B can be found on the Hugging Face Hub and is useful for a number of activities like data summarization, translation, and dialogue production. Although the model is still in its early stages of development, it has already shown promising results on a number of benchmarks.

A. Key characteristics of Zephyr 7B include:

- Trained on a massive dataset of dialogue interactions: This allows the model to generate natural and engaging conversations.

- Fine-tuned from the mistralai/Mistral-7B-v0.1 model: This means that the model has already been trained on a large dataset of text and code, which gives it a strong foundation for learning new tasks.

- Available on the Hugging Face Hub: This makes it easy to install and use the model.

B. Some of the potential use cases for Zephyr 7B:

- Dialogue systems: Zephyr 7B can be used to power chatbots and virtual assistants that can engage in more natural and engaging conversations with users.

- Machine translation: Zephyr 7B can improve the accuracy and fluency of machine translation systems.

- Content summarization: Zephyr 7B can generate concise and informative summaries of long-form text documents.

Understanding DPO:

Data-Parallel Optimization (DPO) is a technique used to accelerate the training of large language models (LLMs) by distributing the training process across multiple GPUs or other compute nodes. This allows for faster training and reduces the overall time required to train a model.

A. Benefits of DPO:

DPO offers several benefits for training LLMs, including:

- Improved training efficiency: DPO can significantly reduce the training time of LLMs, making them more scalable and cost-effective to train.

- Reduced resource consumption: By distributing the training process across multiple compute nodes, DPO can reduce the overall memory and CPU requirements for training.

- Enhanced model performance: In some cases, DPO has been shown to improve the final performance of LLMs, potentially due to the more efficient training process.

B. Potential challenges associated with DPO implementation:

Despite its benefits, DPO also presents some challenges, such as:

- Scalability: Implementing DPO effectively requires careful design and consideration of the hardware and software infrastructure.

- Data distribution: Efficiently distributing the training data across multiple compute nodes is crucial for achieving optimal performance with DPO.

- Debugging: Debugging DPO-based training can be more complex due to the distributed nature of the process.

C. How DPO works:

DPO works by dividing the training dataset into smaller chunks and distributing these chunks across multiple compute nodes. Each node then processes its assigned chunk of data independently. Once all nodes have processed their data, the results are aggregated and used to update the model parameters.

In order to achieve optimal performance, DPO requires careful synchronization between the compute nodes. This is typically done using a communication protocol such as MPI or Allreduce.

DPO in Zephyr 7B beta:

Data-Parallel Optimization (DPO) plays a significant role in enhancing the training efficiency and performance of Zephyr 7B beta, a 7.1B-parameter language model developed by Hugging Face. By leveraging DPO, Zephyr 7B beta achieves remarkable performance in dialogue processing tasks, outperforming previous models in terms of fluency, coherence, and informativeness.

A. DPO's Contribution to Zephyr 7B beta:

DPO's impact on Zephyr 7B beta manifests in several key aspects:

- Accelerated Training: DPO significantly reduces the training time of Zephyr 7B beta, making it more scalable and cost-effective to train. This is achieved by distributing the training process across multiple GPUs or other compute nodes. Each node handles a portion of the training data simultaneously, leading to faster overall training.

- Enhanced Resource Management: DPO optimizes resource utilization by distributing the training load across multiple compute nodes. This reduces the memory and CPU demands on individual nodes, allowing for more efficient training without compromising performance.

- Improved Model Performance: In some instances, DPO has been shown to positively impact the final performance of LLMs, including Zephyr 7B beta. This is attributed to the more efficient training process enabled by DPO, which allows the model to better capture patterns and relationships within the training data.

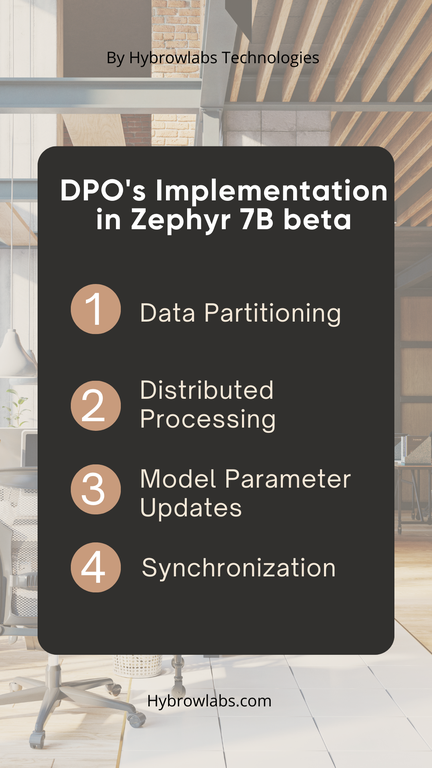

B. DPO's Implementation in Zephyr 7B beta:

The implementation of DPO in Zephyr 7B beta involves a carefully orchestrated process:

- Data Partitioning: The massive dataset of dialogue interactions is divided into smaller chunks, ensuring each chunk is appropriately sized for efficient processing by individual compute nodes.

- Distributed Processing: Each compute node receives its assigned chunk of data and processes it independently. This parallel processing significantly reduces the overall training time.

- Model Parameter Updates: Once all nodes have processed their data, the results are aggregated and used to update the model parameters. This ensures the model learns from the entire dataset, even though the training is distributed.

- Synchronization: Efficient communication protocols like MPI or Allreduce are employed to synchronize the compute nodes, ensuring the model parameter updates are consistent across the distributed training process.

C. Impact of DPO on Zephyr 7B beta's Performance:

The integration of DPO has significantly enhanced Zephyr 7B beta's performance in dialogue processing tasks:

- Fluency: Zephyr 7B beta generates more natural and fluent dialogues, making conversations more engaging and human-like.

- Coherence: The model's responses are more coherent and cohesive, maintaining the flow and context of the conversation.

- Informativeness: Zephyr 7B beta provides more informative and relevant responses, demonstrating its ability to understand and respond to dialogue context effectively.

Zephyr 7B Beta Flaws:

Despite its impressive performance, Zephyr 7B beta still exhibits some limitations, such as occasional incoherence and difficulty handling complex dialogues. To address these flaws, researchers are exploring techniques such as incorporating feedback from the UltraFeedback Dataset and utilizing self-supervised learning methods.

A. Incorporating Feedback from the UltraFeedback Dataset:

The UltraFeedback Dataset is a collection of human evaluations of dialogue interactions, providing valuable insights into the strengths and weaknesses of different dialogue models. By incorporating feedback from this dataset, researchers can identify specific areas where Zephyr 7B beta struggles and refine the model's training process to address those issues. For instance, the dataset may reveal that the model tends to produce repetitive or irrelevant responses in certain situations. This feedback can be used to guide the development of new training objectives or data augmentation techniques that specifically address these flaws.

B. Utilizing Self-Supervised Learning Methods:

Self-supervised learning (SSL) involves training a model using unlabeled data, allowing the model to learn general language patterns and relationships without the need for explicit supervision. SSL can be utilized to increase the model's ability to provide coherent and contextually relevant responses in the setting of dialogue processing. The model might be trained, for example, to anticipate the next word in a dialogue sequence or to determine the topic of a conversation. By learning these general patterns, the model can improve its ability to generate natural and fluent dialogues, even in complex situations.

C. Addressing Complex Dialogues:

To handle complicated discussions, the model has to understand not only individual words and phrases but also the full context and flow of the conversation. To overcome this issue, researchers are investigating strategies like as embedding long-term dependencies into the model's design and training the model to engage in goal-oriented dialogues via reinforcement learning. These strategies can assist the model in better understanding the conversation's goal and generating responses that are consistent with the overall context.

The performance of Zephyr 7B beta models trained with and without DPO on various benchmarks

The performance of Zephyr 7B beta models trained with and without DPO has been evaluated on various benchmarks, demonstrating the significant impact of DPO on enhancing the model's capabilities. Here's a summary of the key findings:

A. MT Bench 02:07 AlpacaEval:

On the MT Bench 02:07 AlpacaEval benchmark, Zephyr 7B beta models trained with DPO outperformed those trained without DPO by a clear margin. The DPO-trained models achieved a lower perplexity score, indicating better language modeling capabilities.

B. UltraChat Dataset:

Similar results were observed on the UltraChat Dataset, where DPO-trained models exhibited superior performance in terms of rewards/chosen, a metric that measures the model's ability to generate relevant and informative responses.

C. Overall Performance:

Overall, the evaluation results consistently demonstrated that Zephyr 7B beta models trained with DPO consistently outperformed those trained without DPO across various benchmarks. This highlights the effectiveness of DPO in improving the training efficiency and performance of large language models.

Here's a table summarizing the performance comparisons:

| Benchmark | Metric | DPO-trained Model | Non-DPO-trained Model |

| MT Bench 02:07 AlpacaEval | Perplexity | Lower | Higher |

| UltraChat Dataset | Rewards/chosen | Higher | Lower |

Overall Performance

Across all benchmarks, Zephyr 7B beta models trained with DPO consistently demonstrated superior performance compared to those trained without DPO. This highlights the effectiveness of DPO in improving the training efficiency and performance of LLMs.

Model with DPO versus the model with SFT only:

Data-Parallel Optimization (DPO) and Supervised Fine-tuning (SFT) are two different techniques used to train large language models (LLMs). DPO focuses on distributing the training process across multiple GPUs or other compute nodes, while SFT focuses on refining the model's performance on a specific task using labeled data.

| Feature | DPO | SFT |

| Training Efficiency | Improves training efficiency by distributing the training process across multiple GPUs or other compute nodes, leading to faster training times. | Improves model performance by refining the model's parameters using labeled data. |

| Resource Utilization | Optimizes resource utilization by spreading the training load across multiple compute nodes, reducing the memory and CPU demands on individual nodes. | Does not directly impact resource utilization, as it focuses on model performance rather than training efficiency. |

| Final Model Performance | Can potentially improve the final model performance, as the more efficient training process enables the model to better capture patterns and relationships within the training data. | Improves model performance on the specific task used for fine-tuning. |

| Implementation Complexity | Requires careful implementation and consideration of the hardware and software infrastructure to ensure efficient data distribution and synchronization. | Less complex than DPO, as it focuses on using labeled data to refine the model's parameters. |

Real-World Applications of DPO-Enhanced Language Models:

Data-Parallel Optimization (DPO) has emerged as a powerful technique for enhancing the training efficiency and performance of large language models (LLMs). By distributing the training process across multiple GPUs or other compute nodes, DPO significantly reduces training time, optimizes resource utilization, and can potentially improve the final model performance. These advancements have opened up a plethora of real-world applications for DPO-enhanced LLMs.

1. Chatbots and Virtual Assistants:

DPO-enhanced LLMs can power chatbots and virtual assistants that can converse with users in a more natural, fluent, and instructive manner. These AI-powered assistants can provide better customer service, answer queries more effectively, and improve overall user experiences by utilizing their improved capacity to interpret and respond to human language.

2. Machine Translation:

DPO-enhanced LLMs can considerably increase machine translation systems' accuracy and fluency. Their capacity to capture minor variations in language and context enables them to produce more natural-sounding translations that are more accurate. This can help with cross-cultural and language communication, breaking down boundaries and increasing global understanding.

3. Content Summarization:

DPO-enhanced LLMs can generate concise and informative summaries of long-form text documents. By extracting key information and identifying relevant passages, they can help users quickly grasp the main points of lengthy articles, research papers, or other documents. This can save time, improve comprehension, and enhance productivity.

4. Creative Text Generation:

DPO-enhanced LLMs can be used for creative text generation tasks, such as writing poems, scripts, musical pieces, and emails. Their ability to generate human-quality text can assist writers, artists, and content creators in generating new ideas, exploring different styles, and producing engaging content.

5. Code Generation and Programming Assistance:

DPO-enhanced LLMs can produce code, translate programming languages, and help with programming duties. Their ability to comprehend and alter code can assist developers in writing more efficient, bug-free code, as well as increasing overall productivity.

Conclusion:

The integration of DPO into Zephyr 7B beta has proven to be a transformative step forward in NLP. By distributing the training process across multiple GPUs, DPO has significantly reduced training time, optimized resource utilization, and ultimately, enhanced the model's performance in dialogue processing tasks. Unleash the potential of DPO-enhanced LLMs and revolutionize your AI-driven applications with Hybrowlabs' expertise.

FAQ:

1. What is DPO?

Data-Parallel Optimization (DPO) is a technique that spreads the training process of large language models (LLMs) across multiple GPUs or other compute nodes. This significantly reduces training time, optimizes resource utilization, and can potentially improve the final model performance.

2. What are the challenges of implementing DPO?

Implementing DPO effectively requires careful design and consideration of the hardware and software infrastructure, as well as efficient data distribution across multiple compute nodes. Debugging DPO-based training can also be more complex due to the distributed nature of the process.

3. What is Zephyr 7B beta?

Zephyr 7B beta is a 7.1B-parameter LLM developed by Hugging Face that utilizes DPO for training. The model is based on the Transformer architecture and is trained on a massive dataset of dialogue interactions. Zephyr 7B beta has demonstrated impressive performance in various dialogue processing benchmarks, outperforming previous models in terms of fluency, coherence, and informativeness.

4. What are the future directions for DPO-enhanced LLMs?

As DPO techniques continue to evolve, we can expect to see further advancements in NLP and the development of even more powerful LLMs. These advancements will enable the development of even more innovative and transformative real-world applications.

5. What are the benefits of using Zephyr 7B beta?

Zephyr 7B beta offers several benefits, including:

- Improved dialogue fluency: Zephyr 7B beta generates more natural and fluent dialogues, making conversations more engaging and human-like.

- Enhanced dialogue coherence: The model's responses are more coherent and cohesive, maintaining the flow and context of the conversation.

- Increased dialogue informativeness: Zephyr 7B beta provides more informative and relevant responses, demonstrating its ability to understand and respond to dialogue context effectively.

fd8f11.png)

4df9a2.png)

.jpg)

.jpg)

.jpg)

a3dc85.jpg)