AI-Augmented Helpdesk: Using AI and Helpdesk Together

AI-Augmented Helpdesk on Azure: Architecture and Workflows Using Azure AI Foundry

Hybrowlabs Technologies Pvt Ltd Internal White Paper — March 2026

Executive Summary

Modern helpdesk operations face a compounding challenge: ticket volumes scale with the business, but skilled support agents do not. The instinctive response — hiring more L1 staff — increases cost without improving quality or speed.

This white paper presents a practical architecture for AI-augmented helpdesk operations built on Microsoft Azure AI Foundry. It describes how Azure's native AI, integration, and storage services can be combined to create an intelligent support layer that drafts responses, retrieves relevant knowledge, and routes approvals to human agents — without removing human judgment from the loop.

The guiding principle throughout: agency stays with the human. Azure AI assists; humans decide.

1. The Problem: Manual Support at Scale

1.1 The Volume-Quality Trap

In most ERPNext / SaaS helpdesks, ticket composition looks approximately like this:

| Ticket Type | Estimated % | Nature |

|---|---|---|

| Standard replies (policy, FAQ) | 40–50% | Templated response, minimal judgment needed |

| History-based replies | 20–30% | Pattern-matched to prior similar tickets |

| Action-required tickets | 20–30% | Requires a human to act in the system |

| Complex / escalation tickets | 5–10% | Requires senior judgment |

By these estimates, roughly 50–70% of tickets can be handled with AI assistance. The remaining 30–50% still need a human, but that human's time is now concentrated on work that requires genuine judgment.

1.2 The Agent Bottleneck

The specific failure pattern observed:

- A senior agent becomes the de-facto knowledge holder

- She manually reads every ticket, composes the reply, and sends it

- Junior agents lack the knowledge or confidence to respond independently

- The senior agent becomes a single point of failure — nothing moves without her

This is not a people problem. It is an architecture problem — one Azure AI Foundry is purpose-built to solve.

2. Solution Architecture: Azure AI Foundry Stack

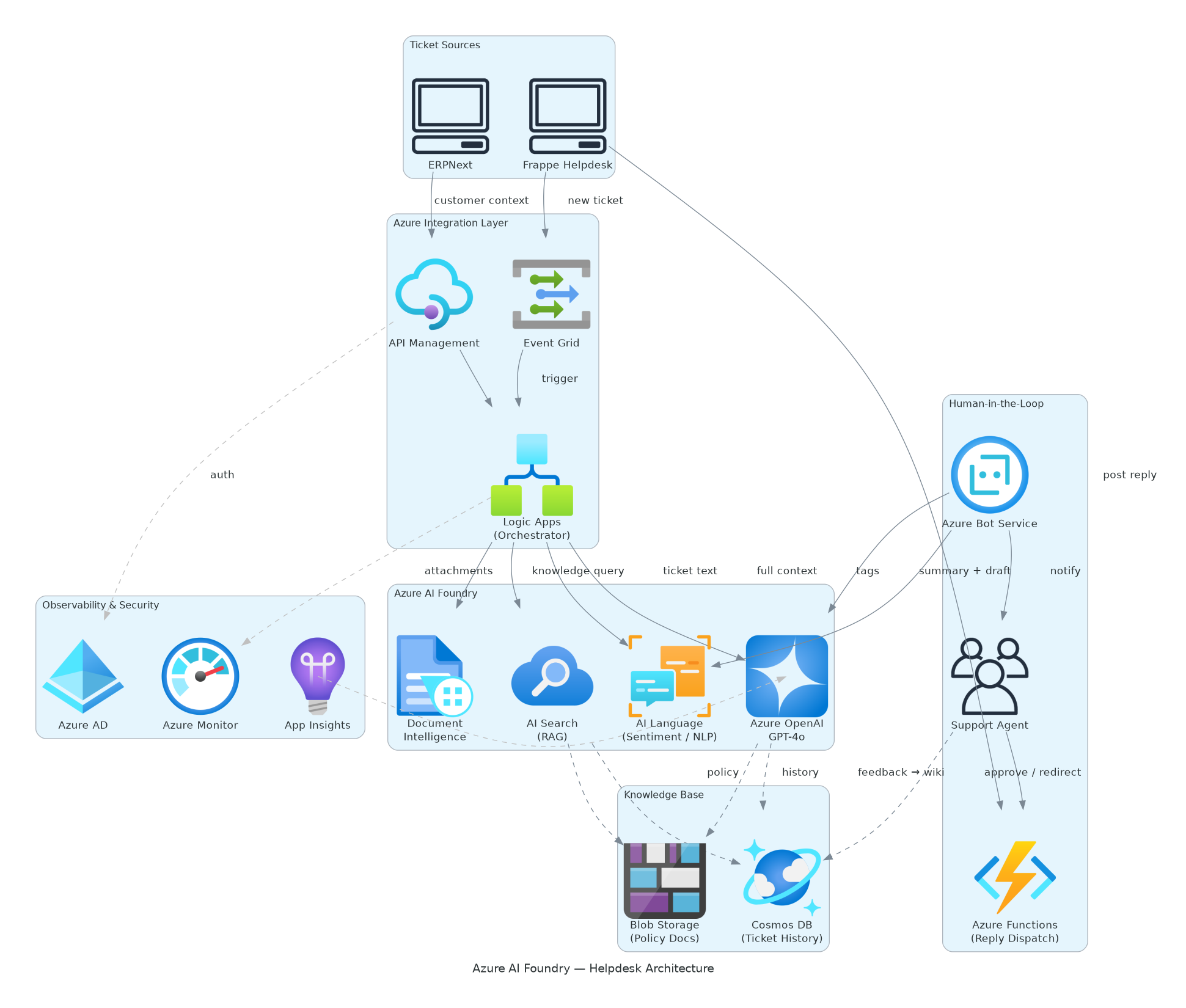

2.1 Architecture Diagram

2.2 Layer-by-Layer Breakdown

The architecture is organised into six layers:

Layer 1 — Ticket Ingestion

| Component | Role |

|---|---|

| Frappe Helpdesk | Primary ticket source — new tickets, replies, escalations |

| ERPNext | Customer master, project data, order history, account status |

Frappe HD raises an event on every new/updated ticket. ERPNext exposes customer context via its REST API.
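
To make the context fetch concrete, the sketch below builds (but does not send) a request against the Frappe/ERPNext REST list endpoint using token authentication. The gateway URL, customer name, credentials, and field list are placeholders chosen for illustration, not values from this deployment.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request


def build_customer_request(base_url, customer, api_key, api_secret, fields):
    """Build (but do not send) a GET request for one customer's context."""
    query = urlencode({
        # Frappe list endpoints take JSON-encoded `fields` and `filters`.
        "fields": json.dumps(fields),
        "filters": json.dumps([["name", "=", customer]]),
    })
    url = f"{base_url.rstrip('/')}/api/resource/Customer?{query}"
    headers = {
        # Frappe token auth: "token <api_key>:<api_secret>".
        "Authorization": f"token {api_key}:{api_secret}",
        "Accept": "application/json",
    }
    return Request(url, headers=headers, method="GET")


request = build_customer_request(
    "https://gateway.example.com/erpnext",  # hypothetical gateway URL
    "Example Customer",
    "KEY",
    "SECRET",
    ["name", "customer_name", "customer_group"],
)
print(request.full_url)
```

Requesting an explicit field list keeps the payload limited to what the drafting step needs.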

Layer 2 — Azure Integration Layer

| Component | Role |

|---|---|

| Azure Event Grid | Event-driven trigger: fires when a new HD ticket arrives |

| Azure Logic Apps | Orchestration engine — coordinates the full ticket-to-reply workflow |

| Azure API Management | Secure API gateway for all ERPNext ↔ Azure data exchanges |

Azure Logic Apps is the central nervous system. It receives the ticket event, calls ERPNext for customer context, fans out to AI processing services, aggregates results, and routes the draft reply to the agent.

Logic Apps provides a no-code / low-code orchestration layer, so business logic changes (routing rules, escalation triggers, SLA thresholds) can be made in the workflow designer without a code deployment.
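
One possible shape for the "new ticket" event is a CloudEvents 1.0 envelope, which Event Grid custom topics can accept. The sketch below wraps a ticket payload in that envelope. The event type name, source path, and ticket fields are assumptions made for illustration; the actual Frappe HD webhook payload may differ.

```python
import json
import uuid
from datetime import datetime, timezone


def to_cloud_event(ticket, event_type="helpdesk.ticket.created",
                   source="/frappe/helpdesk"):
    """Wrap a ticket payload in a CloudEvents 1.0 envelope."""
    return {
        "specversion": "1.0",     # required by CloudEvents 1.0
        "id": str(uuid.uuid4()),  # required, unique per event
        "source": source,         # required; hypothetical value
        "type": event_type,       # required; hypothetical name
        "time": datetime.now(timezone.utc).isoformat(),
        "datacontenttype": "application/json",
        "data": ticket,
    }


event = to_cloud_event({"name": "HD-0001", "subject": "Login issue"})
body = json.dumps(event)  # JSON body a webhook relay could POST to the topic
```

A stable `type` string lets the orchestration subscribe to new tickets separately from replies or escalations.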

Layer 3 — Azure AI Foundry Processing Layer

This is the intelligence core. Four Azure AI services work in concert:

| Service | Role in Workflow |

|---|---|

| Azure OpenAI Service (GPT-4o) | Reads ticket + context + knowledge snippets → generates a one-paragraph summary + draft reply |

| Azure AI Language | Extracts entities (customer name, product, error code), detects sentiment (frustrated, urgent, neutral), classifies ticket category |

| Azure Document Intelligence | Parses PDF / image attachments in tickets — extracts structured text for GPT-4o to reason over |

| Azure AI Search | Semantic vector search over the knowledge base — retrieves the 3–5 most relevant policy snippets and past replies |

How they compose:

| Step | Service | Output |

|---|---|---|

| 1 | Ticket Text + Attachment (input) | Raw ticket content |

| 2 | Azure AI Language | Entities, sentiment score, ticket category |

| 2 | Azure Document Intelligence | Structured text extracted from PDF/image attachments |

| 3 | Azure AI Search | Top-K semantically relevant knowledge base matches |

| 4 | Azure OpenAI — GPT-4o | Receives all of the above as context → generates ticket summary + draft reply |
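
The composition in the table can be sketched as a small pipeline. Every service call below is a local stub standing in for the corresponding Azure service, so the function names, return shapes, and sample ticket are assumptions. Only the ordering follows the table, including the two independent Step 2 calls running side by side.

```python
from concurrent.futures import ThreadPoolExecutor


def analyse_language(text):
    """Stub for Azure AI Language: entities, sentiment, category."""
    return {"entities": [], "sentiment": "neutral", "category": "general"}


def parse_attachments(attachments):
    """Stub for Azure Document Intelligence: one text blob per file."""
    return [f"<extracted text of {name}>" for name in attachments]


def search_knowledge(query, top_k=5):
    """Stub for Azure AI Search: top-K knowledge base matches."""
    return [f"snippet {i} for '{query}'" for i in range(1, top_k + 1)]


def generate_draft(context):
    """Stub for Azure OpenAI: summary plus draft reply."""
    return {"summary": f"Summary of: {context['text']}",
            "draft_reply": "<draft reply>",
            "sources": context["snippets"]}


def process_ticket(ticket):
    # Step 2: the two analysis calls are independent, so run them together.
    with ThreadPoolExecutor(max_workers=2) as pool:
        language = pool.submit(analyse_language, ticket["text"])
        documents = pool.submit(parse_attachments, ticket.get("attachments", []))
        analysis, extracted = language.result(), documents.result()
    # Step 3: retrieve knowledge. Step 4: hand everything to the model.
    snippets = search_knowledge(ticket["text"], top_k=5)
    context = {"text": ticket["text"], "analysis": analysis,
               "attachments": extracted, "snippets": snippets}
    return generate_draft(context)


result = process_ticket({"text": "Cannot log in", "attachments": ["error.png"]})
```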

GPT-4o's system prompt is explicitly bounded:

- Only use ERPNext data + indexed knowledge base

- Do not browse the web or infer from outside sources

- Always be formal and respectful

- If unsure, escalate; never guess
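
As a rough illustration of how these bounds might be carried into a chat-style request, the sketch below assembles a system message and a grounded user message. The exact prompt wording, the opening role sentence, and the sample context are assumptions; only the four rules come from this paper.

```python
import json

SYSTEM_PROMPT = "\n".join([
    "You draft helpdesk replies for a human agent to review.",  # assumed framing
    "Only use the ERPNext data and knowledge snippets provided below.",
    "Do not browse the web or infer from outside sources.",
    "Always be formal and respectful.",
    "If unsure, recommend escalation. Never guess.",
])


def build_messages(ticket_text, customer_context, snippets):
    """Assemble chat messages: bounded system prompt plus grounded context."""
    knowledge = "\n".join(f"[{i}] {s}" for i, s in enumerate(snippets, start=1))
    user_content = (
        f"Ticket:\n{ticket_text}\n\n"
        f"Customer context (ERPNext):\n{json.dumps(customer_context)}\n\n"
        f"Knowledge snippets:\n{knowledge}"
    )
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]


messages = build_messages(
    "I cannot log in to the portal.",
    {"tier": "premium", "open_orders": 2},
    ["Password reset policy", "Account lockout procedure"],
)
```

Numbering the snippets gives the agent a way to see which source a draft relied on.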

Layer 4 — Knowledge Base & Storage

| Component | Role |

|---|---|

| Azure Blob Storage | Stores raw policy documents, uploaded SOPs, ticket attachment files |

| Azure Cosmos DB | Stores ticket history, curated FAQ entries, AI-generated wiki drafts, vector embeddings (via integrated vector search) |

| Frappe Wiki | Human-curated knowledge — agents review and approve AI-generated articles here before they enter the active knowledge base |

The separation between Cosmos DB (raw / AI-generated) and Frappe Wiki (human-approved) is deliberate. Raw historical tickets are never used directly to ground customer replies; they are mined into draft articles, and only human-approved articles enter the active knowledge base.

Layer 5 — Human-in-the-Loop Layer

| Component | Role |

|---|---|

| Azure Bot Service | Delivers ticket summaries + draft replies to the support agent via Microsoft Teams, WhatsApp, or Telegram |

| Support Agent | Reviews the AI-generated package, approves or redirects via voice note or text |

| Azure Functions | Serverless dispatcher — receives agent approval, posts the final reply back to Frappe HD |

The agent never needs to open the helpdesk to handle a standard ticket. They receive a structured notification:

📌 New Ticket: Login Issue — Meril Life (VIP)

🔴 Sentiment: Frustrated | Category: Access Management

📋 Context: 2 open orders, last contacted 4 days ago, premium tier

📝 Suggested Reply:

"Dear [Name], thank you for reaching out. We have escalated your login issue to our access management team and will resolve this within 2 hours. In the meantime..."

→ [Send ✅] [Edit & Send ✏️] [Escalate 🔺]

The agent taps one button. Azure Functions posts the reply. The entire interaction takes under 30 seconds.
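
The notification and the three buttons could be modelled roughly as follows. This is a minimal sketch: the `DraftPackage` fields, the action names, and the shape of the dispatcher instruction are hypothetical and are not taken from Azure Bot Service or Frappe HD.

```python
from dataclasses import dataclass


@dataclass
class DraftPackage:
    ticket_id: str
    title: str
    sentiment: str
    category: str
    context: str
    suggested_reply: str


def render_notification(pkg):
    """Format the package as the text shown to the agent."""
    return "\n".join([
        f"New Ticket: {pkg.title}",
        f"Sentiment: {pkg.sentiment} | Category: {pkg.category}",
        f"Context: {pkg.context}",
        f"Suggested Reply: {pkg.suggested_reply}",
    ])


def handle_action(pkg, action, edited_reply=None):
    """Translate the agent's button tap into a dispatcher instruction."""
    if action == "send":
        return {"ticket_id": pkg.ticket_id, "reply": pkg.suggested_reply}
    if action == "edit_and_send":
        if not edited_reply:
            raise ValueError("edit_and_send requires the edited reply text")
        return {"ticket_id": pkg.ticket_id, "reply": edited_reply}
    if action == "escalate":
        return {"ticket_id": pkg.ticket_id, "escalate": True}
    raise ValueError(f"unknown action: {action}")


pkg = DraftPackage("HD-0001", "Login Issue", "Frustrated", "Access Management",
                   "2 open orders, premium tier", "Dear [Name], thank you...")
print(render_notification(pkg))
print(handle_action(pkg, "send"))
```

Unknown actions raise an error rather than defaulting to a send, so nothing reaches the customer without an explicit choice.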

Layer 6 — Observability

| Component | Role |

|---|---|

| Azure Monitor + Application Insights | End-to-end tracing of every ticket through the pipeline; SLA dashboards; failure alerts |

| Microsoft Entra ID (formerly Azure Active Directory) | Identity and access management: all service-to-service calls authenticated; agent permissions scoped |

3. Workflows

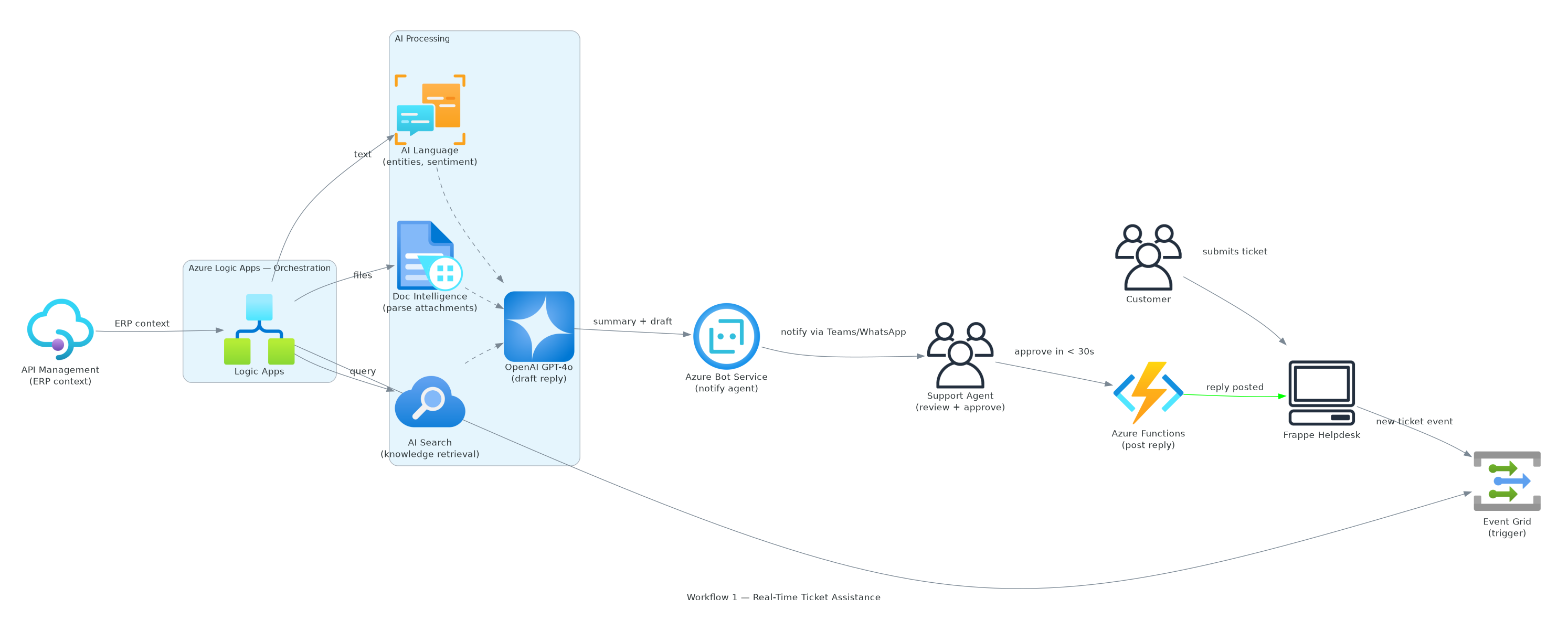

Workflow 1: Real-Time Ticket Assistance

- Customer submits ticket on Frappe HD

- Azure Event Grid fires "new ticket" event

- Azure Logic Apps orchestrates:

  - Fetch customer context from ERPNext via API Management

  - Send ticket text to Azure AI Language → get entities + sentiment

  - Send attachments to Azure Document Intelligence → extract text

  - Query Azure AI Search → retrieve top-5 knowledge base matches

  - Send all to Azure OpenAI (GPT-4o) → generate summary + draft reply

- Azure Bot Service pushes package to agent (Teams / WhatsApp)

- Agent reviews → taps Approve / Edit / Escalate

- Azure Functions posts approved reply to Frappe HD

- Ticket marked Replied; SLA timer updated

SLA target: From ticket submission to agent notification — under 60 seconds.
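
The SLA target reduces to a simple timestamp comparison. The sketch below assumes both timestamps are recorded in UTC; the function names and sample times are illustrative only.

```python
from datetime import datetime, timedelta, timezone

SLA_TARGET = timedelta(seconds=60)  # ticket submission to agent notification


def notification_latency(submitted_at, notified_at):
    """Elapsed time between ticket submission and agent notification."""
    return notified_at - submitted_at


def breaches_sla(submitted_at, notified_at, target=SLA_TARGET):
    """True when the notification arrived later than the target allows."""
    return notification_latency(submitted_at, notified_at) > target


submitted = datetime(2026, 3, 1, 9, 0, 0, tzinfo=timezone.utc)
on_time = submitted + timedelta(seconds=45)
late = submitted + timedelta(seconds=75)
```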

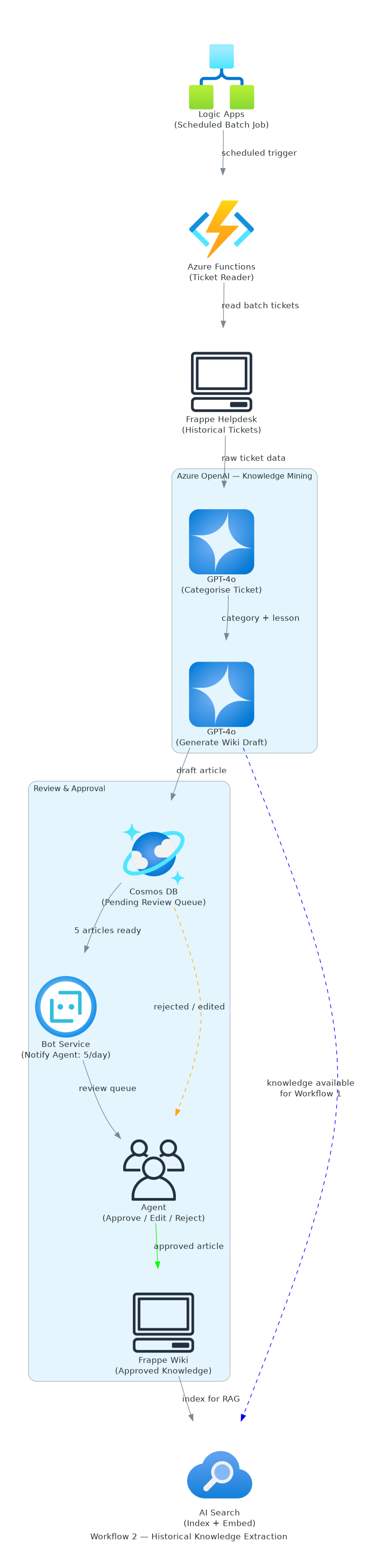

Workflow 2: Historical Knowledge Extraction

This is a batch workflow that runs periodically (daily or weekly) to convert the historical ticket corpus into a curated knowledge base.

- Azure Logic Apps triggers batch job (scheduled)

- Azure Functions reads a batch of tickets from Frappe HD (oldest unprocessed first)

- For each ticket:

  - Azure OpenAI categorises the ticket type

  - Azure OpenAI extracts the core lesson: what was the correct answer?

- Draft wiki article generated (one per ticket category cluster)

- Draft articles stored in Azure Cosmos DB (pending review queue)

- Azure Bot Service notifies agent: "5 wiki articles ready for review"

- Agent reviews in Frappe Wiki → Approve / Edit / Reject

- Approved articles indexed in Azure AI Search (vector embeddings)

- Knowledge base grows; future Workflow 1 draft quality improves automatically

Volume: ~5 wiki articles per day for human review — a manageable workload.
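
The batch flow above can be sketched with plain data structures. The ticket fields, status names, and the lambda standing in for the Azure OpenAI categoriser are assumptions. The sketch also assumes an edited draft returns to the review queue rather than being approved immediately.

```python
from collections import defaultdict


def select_batch(tickets, batch_size):
    """Pick the oldest unprocessed tickets first."""
    pending = [t for t in tickets if not t["processed"]]
    return sorted(pending, key=lambda t: t["created"])[:batch_size]


def draft_articles(batch, categorise):
    """Group tickets by category and create one pending draft per cluster."""
    clusters = defaultdict(list)
    for ticket in batch:
        clusters[categorise(ticket)].append(ticket["id"])
    return [{"category": category, "source_tickets": ids, "status": "pending_review"}
            for category, ids in sorted(clusters.items())]


def review(article, decision):
    """Apply the agent's decision (assumed: edits go back for review)."""
    statuses = {"approve": "approved", "edit": "pending_review", "reject": "rejected"}
    return {**article, "status": statuses[decision]}


def indexable(articles):
    """Only human-approved articles are eligible for the search index."""
    return [a for a in articles if a["status"] == "approved"]


tickets = [
    {"id": "T3", "created": "2025-03-01", "processed": False, "category": "billing"},
    {"id": "T1", "created": "2025-01-10", "processed": False, "category": "login"},
    {"id": "T2", "created": "2025-02-05", "processed": True, "category": "login"},
    {"id": "T4", "created": "2025-01-20", "processed": False, "category": "login"},
]
batch = select_batch(tickets, batch_size=3)
drafts = draft_articles(batch, categorise=lambda t: t["category"])  # stub classifier
reviewed = [review(drafts[0], "reject"), review(drafts[1], "approve")]
```

Here `indexable(reviewed)` returns only the approved article, which mirrors the curation gate: rejected and pending drafts never reach the index.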

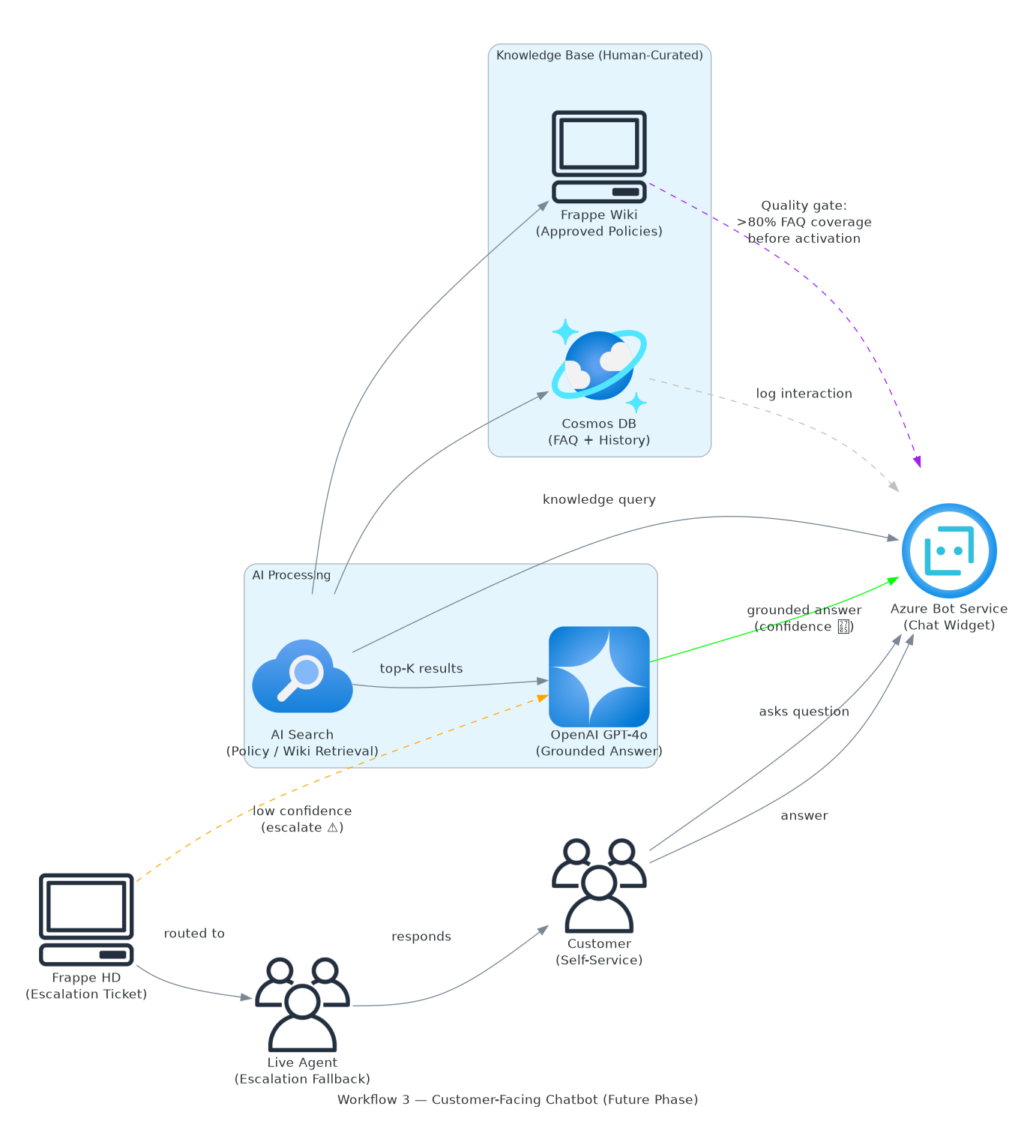

Workflow 3: Customer-Facing Chatbot (Future Phase)

Once the knowledge base reaches sufficient quality and coverage:

- Azure Bot Service exposes a public chat widget (embedded on customer portal)

- Customer asks a question

- Azure AI Search retrieves relevant wiki/policy entries

- Azure OpenAI generates a grounded answer

- If confidence is low → escalate to live agent

- All interactions logged to Cosmos DB → feed back into Workflow 2

This phase is gated behind a policy quality threshold — the chatbot is not activated until the human-curated knowledge base is sufficient to answer >80% of common queries correctly.
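
Both gates in this workflow can be expressed as simple threshold checks. In the sketch below, the graded evaluation list and the 0.7 confidence cut-off are hypothetical. Only the 80% activation threshold comes from this paper.

```python
def chatbot_ready(graded_answers, threshold=0.80):
    """Gate activation on the share of common queries answered correctly.

    `graded_answers` is a list of booleans from a human-graded test set.
    """
    if not graded_answers:
        return False
    return sum(graded_answers) / len(graded_answers) > threshold


def respond(answer, confidence, min_confidence=0.7):
    """Return the grounded answer, or hand off when confidence is low."""
    if confidence < min_confidence:
        return {"action": "escalate_to_agent", "answer": None}
    return {"action": "reply", "answer": answer}
```

For example, 81 correct answers out of 100 passes the activation gate, while exactly 80 out of 100 does not, because the threshold is strict.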

4. Data Governance

4.1 What the AI Can Access

| Source | Access | Notes |

|---|---|---|

| Frappe HD ticket data | ✅ Full read | Current + historical via Logic Apps |

| ERPNext customer/project data | ✅ Scoped read | Via API Management (field-level filtering) |

| Azure Cosmos DB (knowledge base) | ✅ Read; write to pending-review queue only | AI reads and writes drafts to the review queue; humans approve before content goes live |

| Frappe Wiki (approved policies) | ✅ Full read | Read-only for AI; humans edit |

| Azure Blob Storage | ✅ Read | Policy docs, ticket attachments |

| Internet / web search | ❌ Disabled | GPT-4o system prompt + network policy |

| External databases | ❌ Disabled | No outbound data access beyond defined sources |
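
The access table maps naturally onto a deny-by-default allow-list. The sketch below is one way to express it. The source keys and the ERPNext field allow-list are hypothetical names, and in practice enforcement would sit in API Management and network policy rather than in application code.

```python
# Operations permitted per source; anything not listed is denied.
ACCESS_POLICY = {
    "frappe_hd_tickets": {"read"},
    "erpnext_customer_data": {"read"},
    "cosmos_knowledge_base": {"read", "write"},
    "frappe_wiki": {"read"},
    "blob_storage": {"read"},
}

# Hypothetical allow-list for field-level filtering of ERPNext records.
ERPNEXT_ALLOWED_FIELDS = {"name", "customer_name", "customer_group"}


def is_allowed(source, operation):
    """Deny by default: unknown sources (web, external databases) get nothing."""
    return operation in ACCESS_POLICY.get(source, set())


def filter_fields(record, allowed=ERPNEXT_ALLOWED_FIELDS):
    """Drop every field that is not explicitly allow-listed."""
    return {key: value for key, value in record.items() if key in allowed}
```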

4.2 Human Approval Gates

Every external action requires explicit agent approval:

| Action | Gate |

|---|---|

| Sending a reply to a customer | Agent tap/voice approval |

| Modifying an ERPNext record | Not permitted |

| Publishing a wiki article | Agent review + approval |

| Escalating a ticket | Agent action |
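
A minimal sketch of how these gates might be enforced in the dispatcher is shown below. The action keys and the `approved_by` parameter are assumptions. The key property is that unknown actions and ERPNext modifications are refused regardless of who approves.

```python
GATES = {
    "send_reply": "agent_approval",
    "modify_erpnext_record": "not_permitted",
    "publish_wiki_article": "agent_approval",
    "escalate_ticket": "agent_action",
}


def authorise(action, approved_by=None):
    """Allow an external action only when its gate is satisfied."""
    gate = GATES.get(action, "not_permitted")  # unknown actions are refused
    if gate == "not_permitted":
        return False
    # Every remaining gate requires a named human agent.
    return approved_by is not None
```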

4.3 Azure Active Directory Scoping

- Service principals for each Azure service scoped to minimum required permissions

- Agent identities authenticated via AAD before receiving ticket notifications

- All API calls between ERPNext and Azure logged in APIM for audit

5. Azure AI Foundry Product Map

| Capability | Azure Service | Tier Recommendation |

|---|---|---|

| Large Language Model | Azure OpenAI — GPT-4o | Standard S0 |

| Semantic Search / RAG | Azure AI Search | Standard S1 (vector search enabled) |

| NLP / Entity Extraction | Azure AI Language | Standard S |

| Document Parsing | Azure Document Intelligence | Standard S0 |

| Workflow Orchestration | Azure Logic Apps | Consumption plan |

| Serverless Compute | Azure Functions | Consumption plan |

| Event-Driven Triggers | Azure Event Grid | Per-operation pricing |

| API Security | Azure API Management | Developer / Standard |

| Knowledge Store | Azure Cosmos DB | Serverless |

| File Storage | Azure Blob Storage | LRS, Hot tier |

| Bot / Notification Interface | Azure Bot Service | F0 / S1 |

| Monitoring | Azure Monitor + App Insights | Pay-as-you-go (billed on data ingested) |

| Identity | Azure Active Directory | Included in Azure tenant |

6. Implementation Roadmap

Phase 1 — Integration Foundation (Weeks 1–2)

- [ ] Provision Azure tenant + resource group

- [ ] Set up an Azure Event Grid custom topic and subscription to receive Frappe HD webhook events

- [ ] Configure Azure API Management for ERPNext REST API

- [ ] Deploy Azure Logic Apps skeleton (trigger → fetch context → log)

- [ ] Set up Azure Active Directory service principals

Outcome: Event-driven pipeline established. Tickets flow into Azure on creation.

Phase 2 — AI Processing Layer (Weeks 3–4)

- [ ] Deploy Azure OpenAI (GPT-4o) with bounded system prompt

- [ ] Configure Azure AI Language for ticket classification

- [ ] Set up Azure AI Search index (initial seed: last 200 best-practice replies)

- [ ] Connect Logic Apps to AI services

- [ ] Deploy Azure Bot Service → Teams / WhatsApp notification

Outcome: Agent receives AI-generated summary + draft reply for every new ticket.

Phase 3 — Knowledge Base Pipeline (Weeks 5–8)

- [ ] Deploy Cosmos DB knowledge store + vector index

- [ ] Build historical ticket mining Logic App (batch, scheduled)

- [ ] Build wiki article review queue in Frappe Wiki

- [ ] Enable Azure Document Intelligence for attachment parsing

- [ ] First pass: mine last 1,000 tickets → generate initial FAQ set

Outcome: Growing knowledge base. Draft quality improves week-over-week.

Phase 4 — Customer Chatbot (Month 3+)

- [ ] Evaluate knowledge base coverage (>80% FAQ hit rate threshold)

- [ ] Enable Azure Bot Service public-facing channel

- [ ] Embed chatbot widget on customer portal

- [ ] Monitor via Azure Monitor; set escalation fallback rules

Outcome: Tier-0 self-service deflects 20–30% of tickets before they reach an agent.

7. Expected Outcomes

| Metric | Baseline | Target (6 months) |

|---|---|---|

| Avg first response time | 4–8 hours | < 30 minutes |

| % tickets handled by L1 (AI-assisted) | ~20% | 60–70% |

| Senior agent hours on L1 tickets | ~70% of day | < 20% of day |

| Knowledge base articles (approved) | ~0 explicit | 300+ indexed |

| New agent onboarding time | 2–3 weeks | 3–5 days |

| Tier-0 chatbot deflection rate (Phase 4) | 0% | 20–30% |

8. Risks and Mitigations

| Risk | Mitigation |

|---|---|

| GPT-4o hallucinates an answer | Bounded system prompt + Azure AI Search grounding; agent approval gate |

| Historical tickets contain wrong answers | Human curation gate — raw history never directly used |

| Agent over-relies on AI | Draft always shows AI reasoning; agent must explicitly approve |

| PII in ticket data | Azure AI Language PII detection; field-level masking in APIM |

| Knowledge base becomes stale | Weekly review queue; new corrections from live tickets feed proposal queue |

| Azure service outages | Azure Monitor alerts; fallback to direct HD access for critical escalations |
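
To illustrate the masking idea in the PII row, the sketch below replaces email addresses and phone-like numbers with placeholders before text leaves the gateway. It is a regex stand-in written for this example. It is not the Azure AI Language PII detection API, and the patterns are deliberately simple and will miss many formats.

```python
import re

# Simplified patterns, assumed for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def mask_pii(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


masked = mask_pii("Reach me at a.b@example.com or +91 98765 43210 today.")
print(masked)
```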

9. The Broader Principle

This architecture is a template for deploying AI across any knowledge-intensive business operation:

- Azure Event Grid makes any system event-driven — not just helpdesk

- Azure Logic Apps is the orchestration layer for any multi-step AI workflow

- Azure OpenAI + AI Search (RAG pattern) can be applied to HR, Finance, Sales, Project Management

- Human curation as a quality gate prevents compounding errors in AI output

- Azure Bot Service brings AI to wherever the human already works (Teams, WhatsApp)

The same stack can power: CV screening (HR), contract review (Legal), expense anomaly detection (Finance), and project status drafting (PMO) — all with the same human-in-the-loop principle.

Appendix: Full Azure Service List

| Service | Purpose |

|---|---|

| Azure OpenAI Service (GPT-4o) | LLM for summarisation and reply generation |

| Azure AI Search | Vector + semantic knowledge retrieval (RAG) |

| Azure AI Language | NLP: sentiment, entities, classification |

| Azure Document Intelligence | PDF/image attachment parsing |

| Azure Logic Apps | Workflow orchestration |

| Azure Functions | Serverless reply dispatch |

| Azure Event Grid | Event-driven triggers |

| Azure API Management | Secure API gateway for ERPNext |

| Azure Cosmos DB | Knowledge store + vector embeddings |

| Azure Blob Storage | Document and attachment storage |

| Azure Bot Service | Agent notification interface |

| Azure Monitor + App Insights | Observability and SLA tracking |

| Azure Active Directory | Identity, access, and audit |

Prepared by Rez (AI Assistant, Hybrowlabs), March 2026

Contact: chinmay.kulkarni@hybrowlabs.tech