Infrastructure & Scalability

1. Deployment & Hosting Options

Summary: Frappe/ERPNext can be deployed as SaaS (Frappe Cloud), on any public cloud (AWS/Azure/GCP), on on-premise Linux servers, or in a hybrid setup. Because the source code is fully open, there is no software-level vendor lock-in.

- ✅ SaaS (Frappe Cloud): Managed hosting at cloud.frappe.io. Frappe handles infrastructure, patches, backups. Unlimited users pricing model.

- ✅ On-premise: Self-hosted on Ubuntu/Debian Linux. Full control. Recommended for sensitive data industries.

- ✅ Public Cloud: AWS, Azure, GCP, DigitalOcean, Linode, Hetzner — all supported. Docker + Kubernetes deployment available.

- ✅ Indian Cloud Vendors: Deployable on Yotta, NxtGen, BSNL Cloud, Tata TCS Cloud, AWS Mumbai/Hyderabad regions — full data residency in India achievable.

- ✅ Hybrid: Multiple bench instances can federate data via Event Streaming (Kafka/built-in v12+).

- ✅ Data Residency: On-premise or Indian-region cloud deployments ensure data never leaves India.

- ✅ HA Architecture: Master-replica MariaDB, multiple Gunicorn workers, Redis clustering, NGINX load balancer.

- ⚠️ Frappe Cloud SLA: Inherits AWS/OCI SLA (~99.99% multi-AZ); Frappe's own hosted SLA doesn't explicitly guarantee 99.99%.

- ✅ DR: Frappe Cloud backs up to Amazon S3 offsite. Self-hosted can use rsync + S3/GCS/Azure Blob.

- 🔧 Managed HA/DR: Requires proper infra configuration for self-hosted; Frappe Cloud handles this automatically.

Architecture: Four Deployment Options

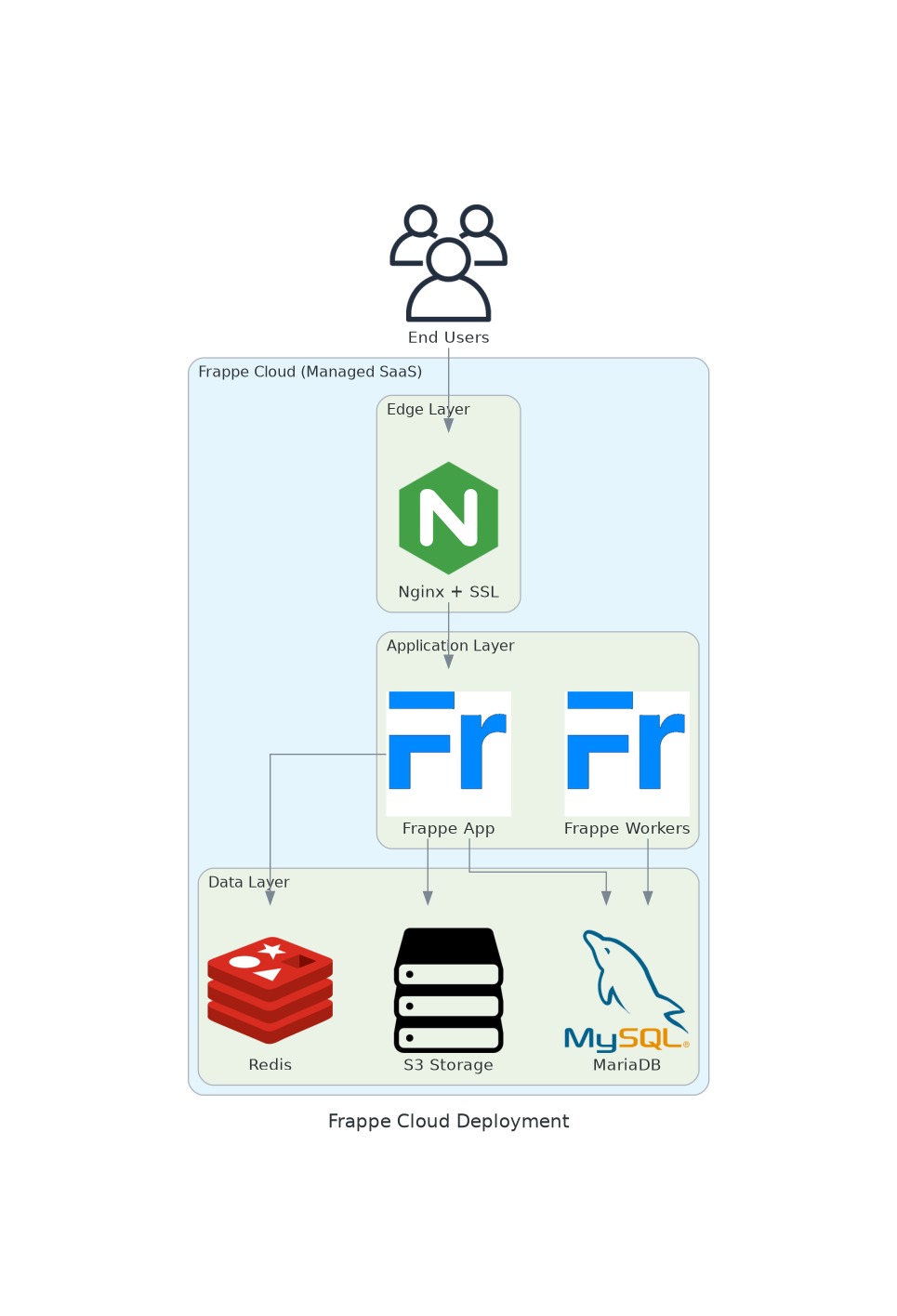

Option A — Frappe Cloud (Managed SaaS)

🆕 Frappe Cloud now supports horizontal scaling. Multiple application server instances can be provisioned behind a shared load balancer, with stateless Frappe workers scaling independently to handle traffic spikes — all managed natively on the Frappe Cloud platform without manual Kubernetes configuration.

Frappe Cloud is a fully managed SaaS platform on AWS/OCI. Zero infrastructure management — deploy with a few clicks.

Key Points: Automatic S3 backups; Frappe handles patches/SSL/monitoring; multi-AZ availability; unlimited users pricing.

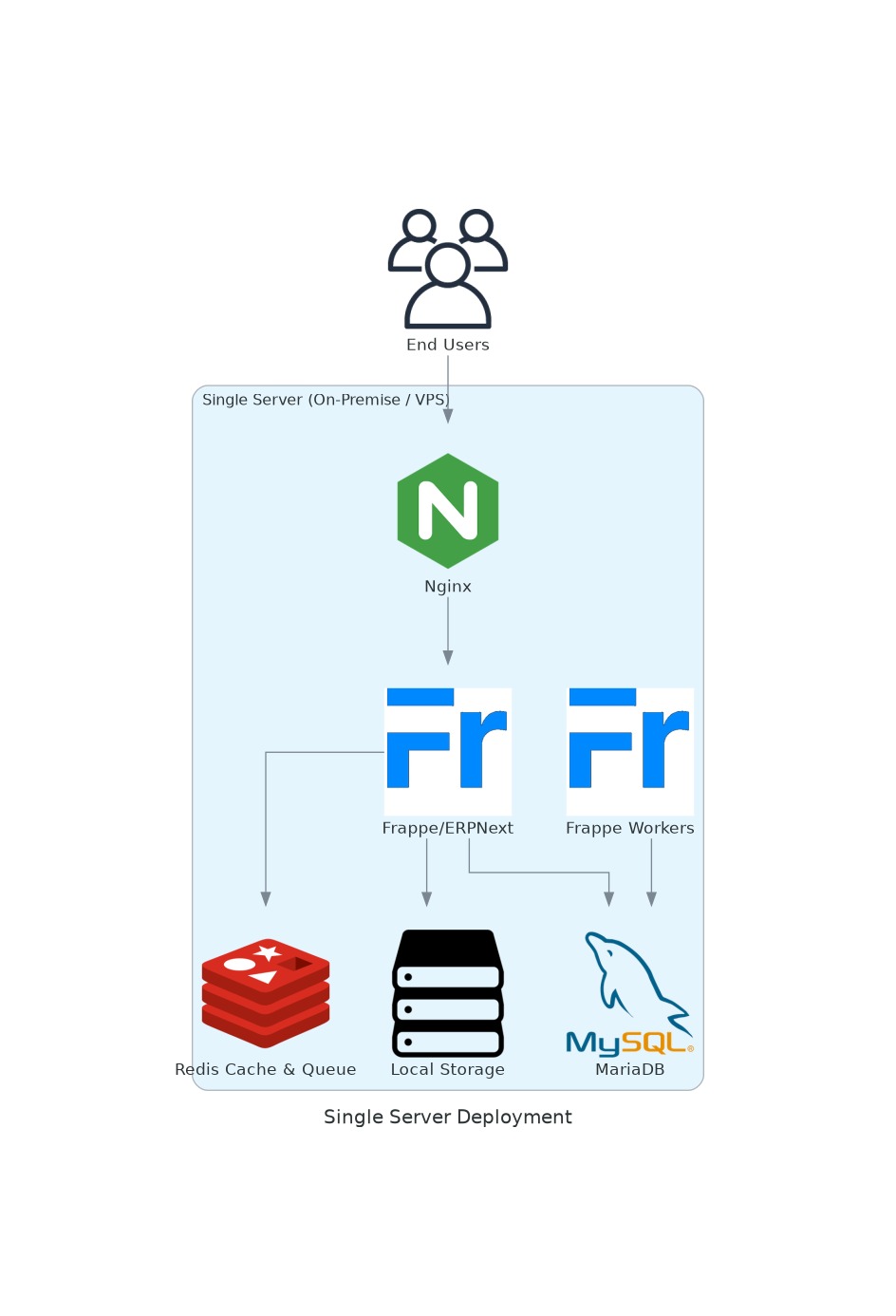

Option B — Single-Server Hosting (Self-Hosted VM)

All Frappe components on a single Linux VM. Common for SMBs, pilots, dev/test.

Key Points: Simple bench init setup; all components co-located; single point of failure; upgrade path to Press/Kubernetes as load grows.

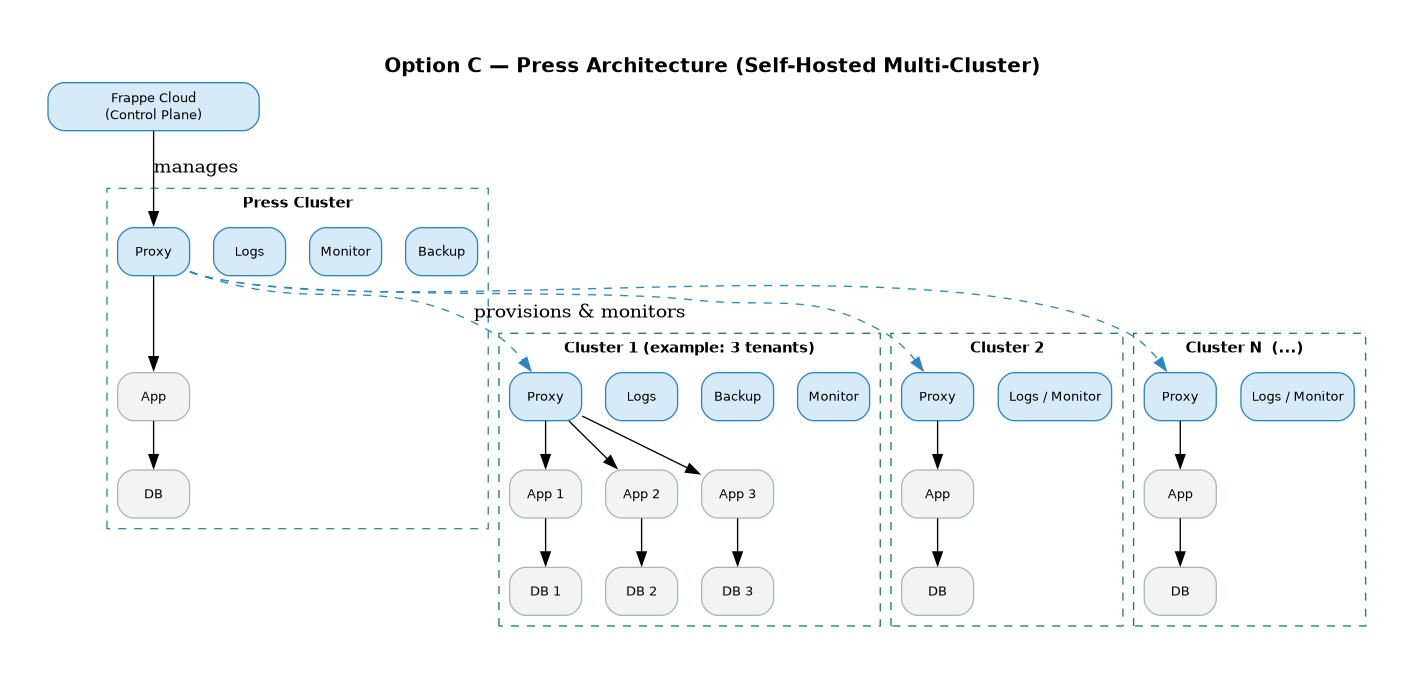

Option C — Press (Frappe's Open-Source Self-Hosting Platform)

Horizontal Scaling Limitations in Press

Press is designed for vertical scaling and multi-tenant isolation, not true horizontal scaling. Key limitations to be aware of:

- ❌ No shared DB across workers — each site has its own MariaDB instance. There is no connection pooling or shared data layer across tenants, making horizontal scaling of a single site complex

- ❌ No native Kubernetes support — Press manages VMs/bare-metal clusters, not container orchestration. Scaling out requires provisioning new servers manually via the Press dashboard

- ❌ Single app server per site — by default, each Frappe site runs on one server. Distributing a single site across multiple app nodes requires manual Nginx + shared NFS/S3 setup outside of Press

- ⚠️ Scaling = adding clusters — the Press model scales by adding new clusters (server groups), not by auto-scaling pods. This is coarser-grained than Kubernetes HPA

- ⚠️ No built-in load balancer per site — the Proxy in each cluster routes between sites, but does not load-balance a single high-traffic site across multiple workers natively

- ✅ Best for — self-hosted ERPNext SaaS providers, multi-client deployments where each client is isolated, teams who want Frappe Cloud-like management without handing over data to Frappe

For workloads requiring true horizontal scaling of a single site, Option D (Kubernetes) is the recommended path.

Press is Frappe's multi-tenant platform — the same technology powering Frappe Cloud, but self-hosted.

Key Points: Multi-tenant isolation per site; central management dashboard; best for hosting providers, universities, large enterprises with multiple entities; open source: https://github.com/frappe/press.

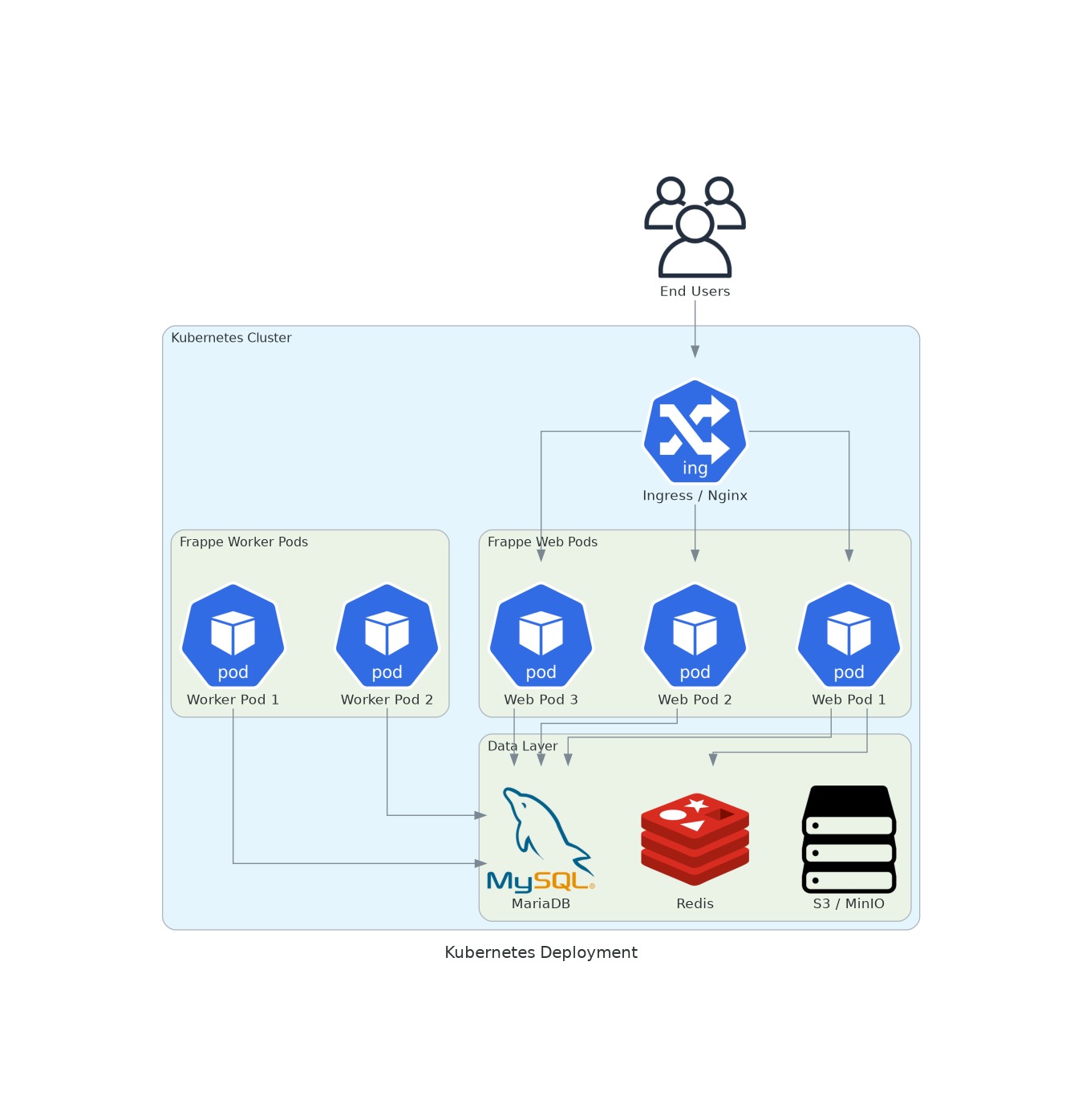

Option D — Kubernetes (Production-Grade Container Orchestration)

For large-scale deployments requiring auto-scaling, rolling updates, and fault tolerance.

Key Points: HPA auto-scales web/worker pods based on CPU/memory/queue depth; rolling updates with zero downtime; Helm chart: frappe/frappe-helm; stateful services use StatefulSets or managed cloud DBs (RDS, ElastiCache).

1a. Federation Architecture

Concept: Multiple independent Frappe instances (per branch, entity, or region) each managing their own data, with periodic aggregation to a central Mothership Instance via an ETL pipeline.

Use Case

Large enterprises or universities with distributed operations, e.g., multiple departments or campuses that each run their own Frappe instance and feed consolidated data to a central analytics/reporting instance.

Architecture Diagram

Key Points

- Each satellite instance is fully autonomous — outages in one don't affect others

- ETL pipeline runs on a schedule (hourly/nightly) or via event triggers

- Frappe's built-in Event Streaming (v12+) can serve as the near-real-time sync mechanism

- The Mothership is read-heavy — optimised for analytics, not transactions

- Data sovereignty: each instance can be in a different region/jurisdiction
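
The aggregation step described above can be sketched as an upsert keyed by satellite and document name, where the newest version wins. This is a minimal illustration, not Frappe's Event Streaming implementation: the `Record` shape, the in-memory `store`, and the function name are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Record:
    """One document exported from a satellite instance (hypothetical shape)."""
    source: str          # satellite identifier, e.g. "campus-a"
    name: str            # document name, unique within its source
    modified: datetime   # last-modified timestamp on the satellite
    payload: dict


def merge_into_mothership(store: dict, batch: list[Record]) -> int:
    """Upsert a batch, keeping the newest version of each (source, name) pair.

    Returns the number of records that were inserted or updated.
    """
    changed = 0
    for rec in batch:
        key = (rec.source, rec.name)
        existing = store.get(key)
        # Insert new documents; overwrite only when the incoming copy is newer.
        if existing is None or rec.modified > existing.modified:
            store[key] = rec
            changed += 1
    return changed
```

Keying on `(source, name)` keeps documents from different satellites apart even when their names collide, and the timestamp comparison makes a re-run of the same batch a no-op.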

2. Scalability

Summary: ERPNext scales horizontally using multiple app servers sharing a single MariaDB + Redis backend. The architecture supports thousands of concurrent users, multi-company, multi-branch, and multi-currency deployments natively.

- 🆕 Frappe Cloud Horizontal Scaling: Frappe Cloud natively supports horizontal scaling — spin up additional app server nodes on-demand without managing your own Kubernetes cluster

- ✅ Concurrent Users: Proven to 1,000+ concurrent users on Frappe Cloud; community deployments run 200+ concurrent users on dedicated servers.

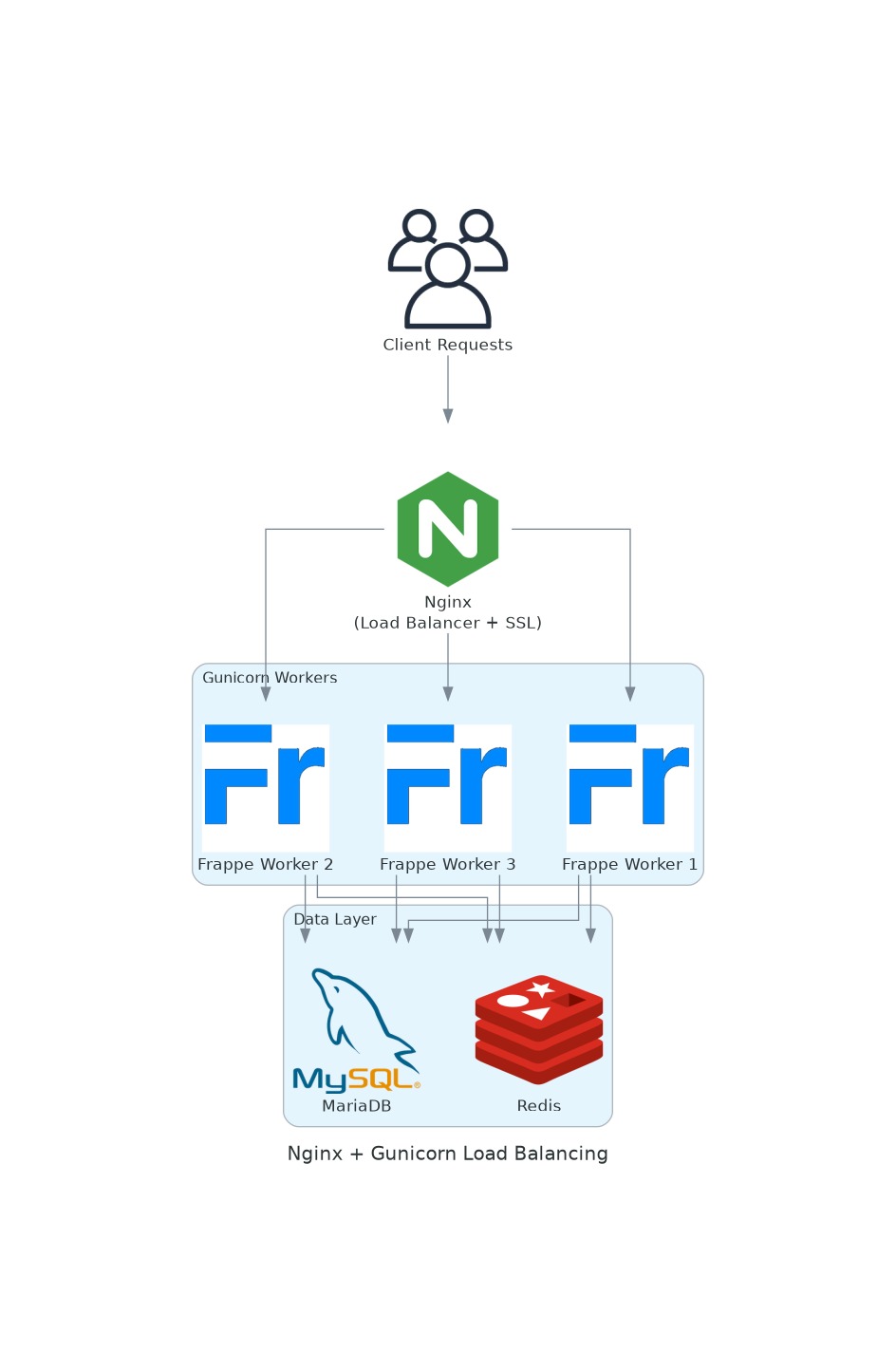

- ✅ Horizontal Scaling: Multiple Bench/Gunicorn instances behind NGINX load balancer, sharing a common MariaDB cluster.

- ✅ Vertical Scaling: Scale up MariaDB RAM/CPU; tune `innodb_buffer_pool_size`, `max_connections`.

- ✅ Multi-Company: Native multi-company with inter-company transactions, consolidated financials.

- ✅ Multi-Branch: Supported via Cost Centers, Warehouses, and custom dimension tagging.

- ✅ Multi-Currency: Built-in multi-currency with exchange rate management and revaluation.

- ✅ Multi-Language: 100+ languages supported via translation files; UI fully translatable.

- ✅ DB Scalability: MariaDB read replicas; ledger partitioning for large multi-company entities.

- ✅ Load Balancing: NGINX upstream configuration; Redis cluster for distributed caching.

- ✅ High Transaction Load: Separate web and job workers; heavy batch jobs run on dedicated background worker nodes.

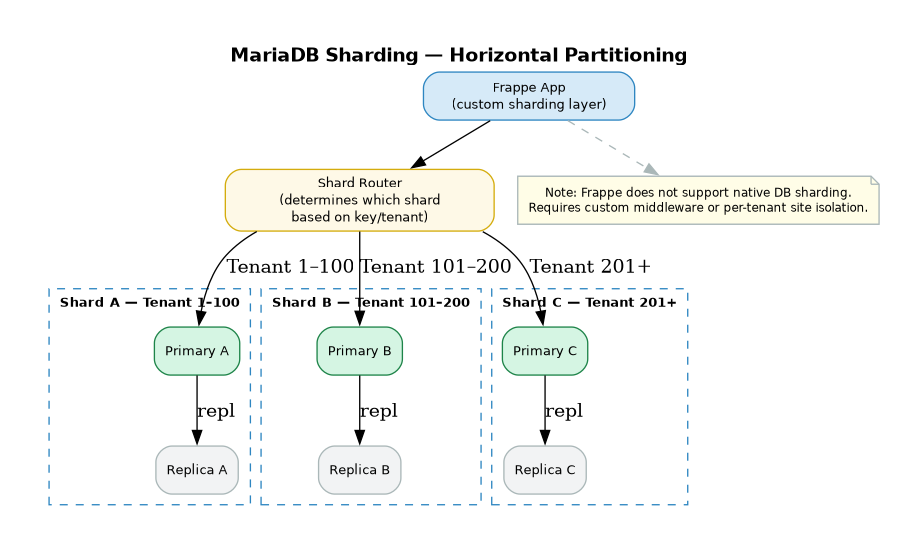

- ⚠️ DB Sharding: Not natively supported; large deployments use federated instances with Event Streaming (Apache Kafka integration).

- 🔧 Multi-Instance Sync: Event Streaming framework (v12+) enables cross-instance document sync.

Scalability Deep Dive

A. Compute Scalability — Kubernetes Horizontal Pod Autoscaler

Frappe workers (web + background) are stateless and horizontally scalable via Kubernetes HPA.

- Workers are stateless — new pods connect to shared MariaDB + Redis immediately

- Scale web workers and background job workers independently

- HPA metrics: CPU utilisation, memory pressure, or custom (e.g., Redis queue depth via KEDA)
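
To make the autoscaling behaviour concrete, the sketch below reproduces the replica calculation documented for the Kubernetes HPA: desired = ceil(current × currentMetric / targetMetric), skipped when the ratio is within a tolerance of 1.0. The function name and the default bounds are illustrative assumptions; the real controller also applies stabilisation windows and readiness checks that are omitted here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Approximate the HPA scaling decision for a single metric."""
    ratio = current_metric / target_metric
    # Within tolerance of the target: leave the replica count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds (minReplicas / maxReplicas).
    return max(min_replicas, min(max_replicas, desired))
```

For example, four web pods averaging 90% CPU against a 60% target would be scaled to six, since ceil(4 × 1.5) = 6.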

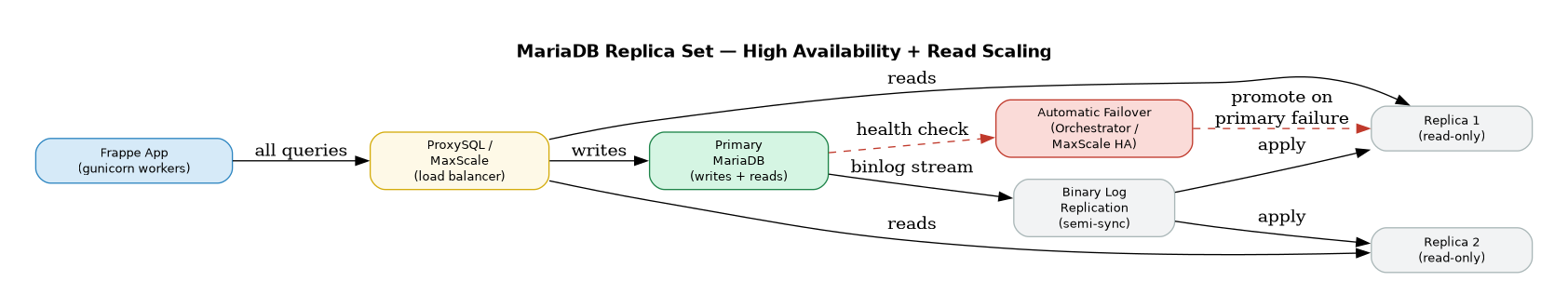

B. Database Scalability — MariaDB Read Replicas

Frappe supports read replica configuration to offload read-heavy queries from the primary DB.

- Configured via `site_config.json`: `"read_from_replica": 1` plus the replica DB connection details

- Replicas in same AZ (low latency) or different regions (geo-distribution)

- Significantly reduces primary DB load — better write throughput and stability
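
As a rough sketch of the configuration step, the helper below adds replica settings to an existing site configuration and prints the resulting JSON. Only the read-from-replica flag comes from the text above; the `replica_host` key and the sample host name are assumptions to be checked against the Frappe version in use.

```python
import json


def with_read_replica(site_config: dict, replica_host: str) -> dict:
    """Return a copy of site_config with read-replica settings added."""
    updated = dict(site_config)          # do not mutate the caller's dict
    updated["read_from_replica"] = 1     # flag named in the text above
    updated["replica_host"] = replica_host  # assumed key name; verify in docs
    return updated


if __name__ == "__main__":
    base = {"db_name": "site1", "db_password": "change-me"}
    print(json.dumps(with_read_replica(base, "db-replica.internal"), indent=2))
```

Returning a copy rather than editing in place makes it easy to diff the old and new configuration before writing the file.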

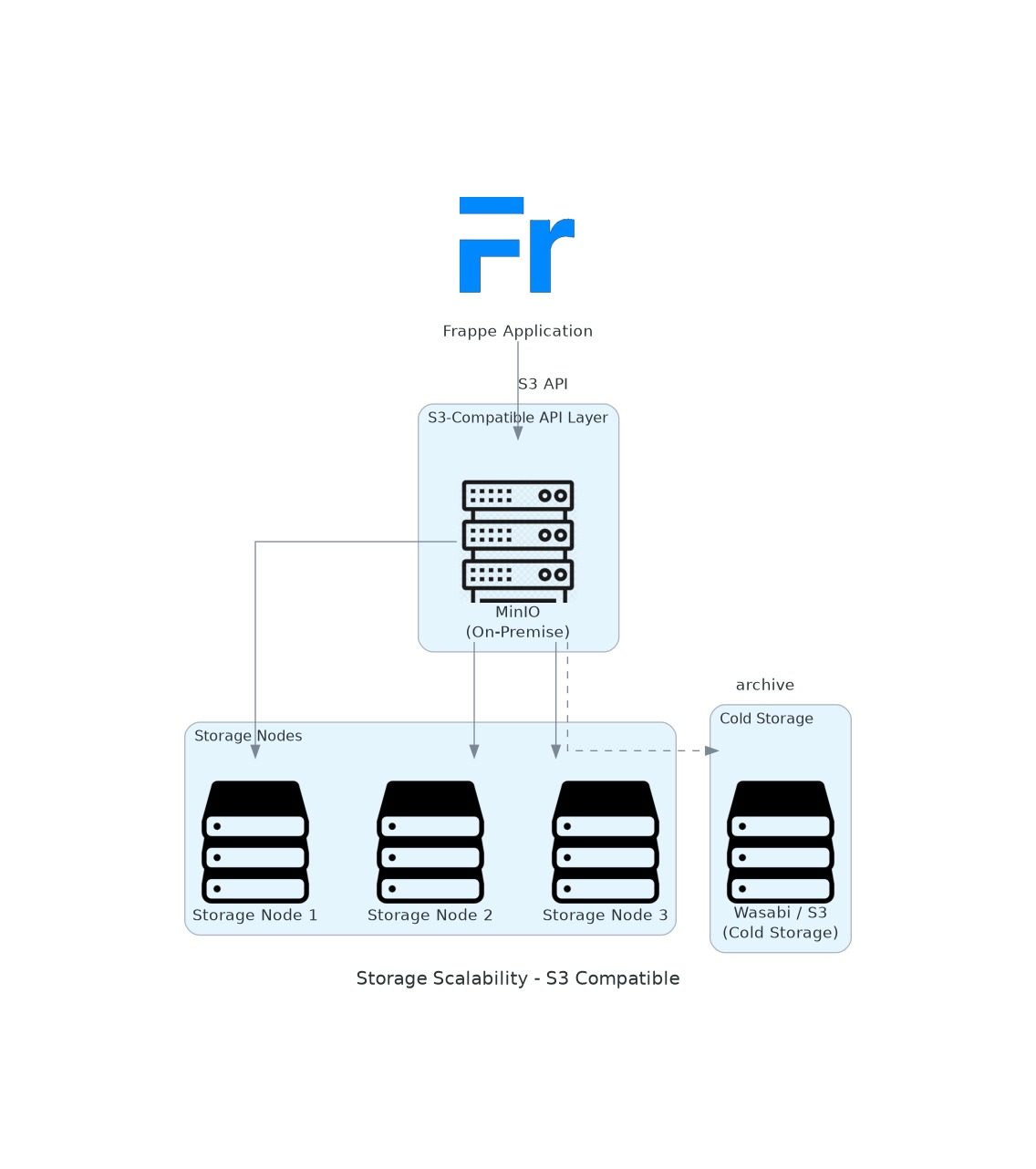

C. Storage Scalability — S3-Compliant API

Frappe's file storage supports any S3-compatible API — enabling horizontal storage scaling across cloud and on-premise.

- AWS S3: Configure via `s3_bucket`, `aws_access_key_id`, `aws_secret_access_key`, `s3_endpoint_url` in `site_config.json`

- Wasabi: Cold storage at ~80% lower cost than S3 — ideal for old attachments and backups

- Enables horizontal storage scaling independent of application or DB layer
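
One way to use a cheaper S3-compatible tier for old attachments is an age-based rule, sketched below. The function name, the tier labels, and the 365-day threshold are hypothetical; nothing here is a built-in Frappe feature, and moving the objects would be done by a separate job.

```python
from datetime import datetime, timedelta


def pick_storage_tier(last_accessed: datetime, now: datetime,
                      cold_after_days: int = 365) -> str:
    """Return "cold" for files untouched longer than the threshold, else "hot"."""
    age = now - last_accessed
    return "cold" if age > timedelta(days=cold_after_days) else "hot"
```

Because both tiers speak the same S3 API, a migration job only needs a different endpoint and bucket for the "cold" result.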

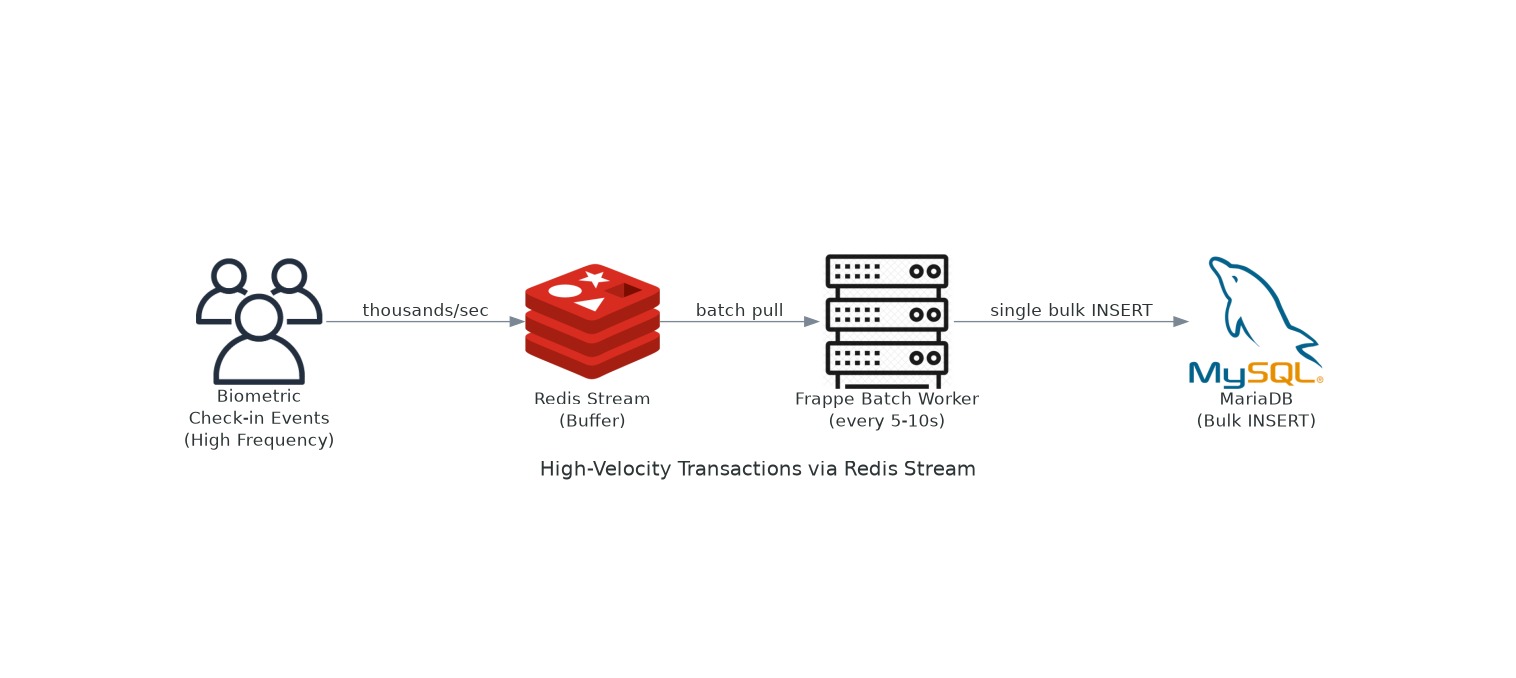

2a. High-Velocity Transaction Handling

Use Case: Recording biometric check-ins, attendance events, or IoT sensor data at thousands of requests per second without overwhelming the database.

The Problem

Direct DB writes at high frequency (thousands/sec) will saturate MariaDB connections and degrade the entire system. A buffering pattern is required.
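
A minimal sketch of such a buffering pattern follows: events accumulate in memory and are written in one transaction per batch instead of one write per event. SQLite stands in for MariaDB so the example runs anywhere, and the `WriteBuffer` class, the `checkin` table, and the batch size are illustrative assumptions. A production setup would more likely buffer in Redis or a message queue so that events survive a process restart.

```python
import sqlite3
from collections import deque


class WriteBuffer:
    """Collect events in memory and insert them in batches."""

    def __init__(self, conn: sqlite3.Connection, batch_size: int = 500):
        self.conn = conn
        self.batch_size = batch_size
        self.pending = deque()

    def record(self, device_id: str, ts: float) -> None:
        self.pending.append((device_id, ts))
        if len(self.pending) >= self.batch_size:
            self.flush()

    def flush(self) -> int:
        """Write all pending events in a single transaction; return the count."""
        if not self.pending:
            return 0
        rows = list(self.pending)
        with self.conn:  # commits on success, rolls back on error
            self.conn.executemany(
                "INSERT INTO checkin (device_id, ts) VALUES (?, ?)", rows
            )
        self.pending.clear()  # clear only after the batch is committed
        return len(rows)
```

With a batch size of 500, a burst of 5,000 check-ins becomes 10 transactions rather than 5,000, which is what keeps the connection count and lock contention manageable.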

Detailed Architecture

Why This Pattern?

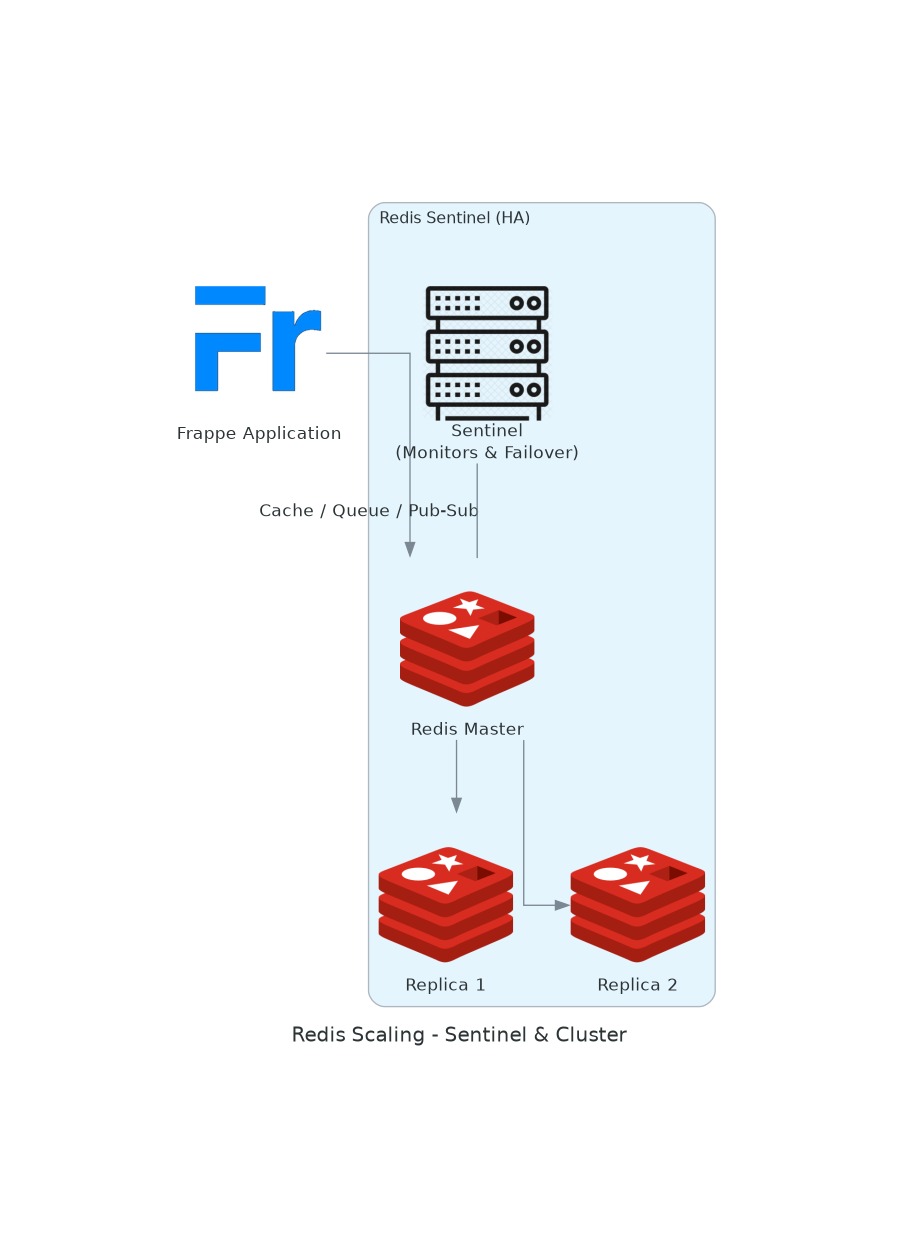

2b. Scaling Redis

Frappe relies on Redis for three distinct purposes:

- Cache — Frappe metadata, page cache, session cache (Frappe Caffeine in v16)

- Queue — RQ (Redis Queue) for all background/async job workers

- Real-time — Socket.IO pub/sub for live notifications and form updates

Redis Sentinel — High Availability (Automatic Failover)

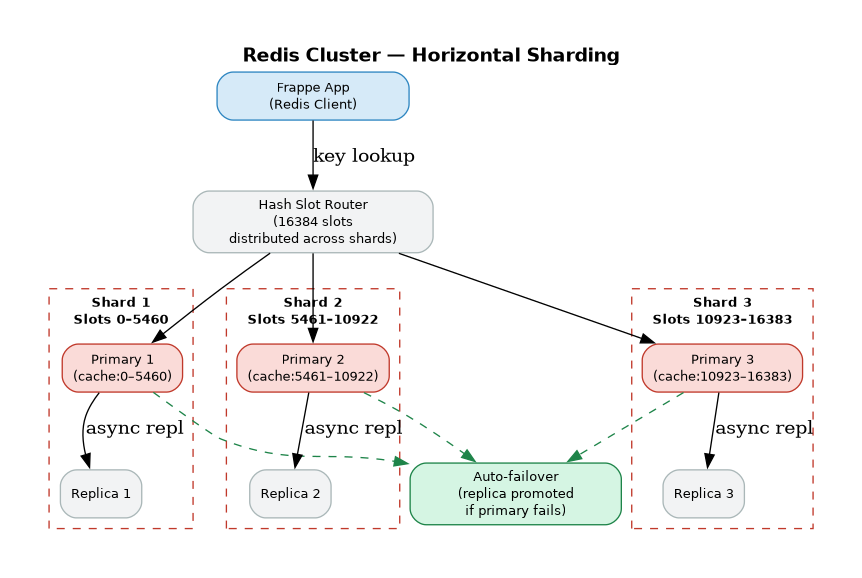

Redis Cluster — Horizontal Sharding

Frappe Redis Usage Summary

Recommendation for large deployments:

- Start with Redis Sentinel (3 sentinels + 1 primary + 2 replicas) for HA and automatic failover

- Move to Redis Cluster when single-node memory exceeds ~50GB or throughput becomes a bottleneck

- Keep cache, queue, and real-time on separate Redis instances for workload isolation
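
The workload-isolation recommendation can be expressed as three separate Redis endpoints in the bench-level configuration. The sketch below builds that fragment; `redis_cache`, `redis_queue`, and `redis_socketio` are the key names used in `common_site_config.json` by many Frappe releases, but newer versions may differ, so treat them, the host names, and the port as assumptions to verify.

```python
def redis_endpoints(cache_host: str, queue_host: str, realtime_host: str,
                    port: int = 6379) -> dict:
    """Build Redis URLs for cache, queue, and real-time on separate hosts."""
    hosts = (cache_host, queue_host, realtime_host)
    if len(set(hosts)) != len(hosts):
        # Sharing a host defeats the isolation this layout is meant to give.
        raise ValueError("cache, queue, and real-time must use distinct hosts")
    return {
        "redis_cache": f"redis://{cache_host}:{port}",
        "redis_queue": f"redis://{queue_host}:{port}",
        "redis_socketio": f"redis://{realtime_host}:{port}",
    }
```

Keeping the queue on its own instance means a cache eviction storm cannot delay background jobs, and vice versa.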

2c. Scaling MongoDB

Note: Frappe core uses MariaDB as its primary database. MongoDB is used in the Frappe ecosystem only for auxiliary data such as analytics, logs, unstructured documents, or custom apps where document-oriented storage is beneficial.

MongoDB Replica Set — High Availability + Read Scaling

MongoDB Sharding — Horizontal Partitioning

Use Cases in Frappe Ecosystem

2d. Load Balancing with Nginx + Gunicorn

Nginx acts as the reverse proxy, SSL terminator, static file server, and load balancer in front of multiple Gunicorn worker processes — the standard Frappe production architecture.
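
As an illustration of the load-balancing piece, the helper below renders an NGINX `upstream` block from a list of application servers. The upstream name, addresses, and port are placeholders; NGINX distributes requests round-robin across the listed servers by default.

```python
def render_upstream(name: str, backends: list[tuple[str, int]]) -> str:
    """Render an NGINX upstream block listing each Gunicorn backend."""
    lines = [f"upstream {name} {{"]
    for host, port in backends:
        lines.append(f"    server {host}:{port} fail_timeout=0;")
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_upstream("frappe_app", [("10.0.0.11", 8000), ("10.0.0.12", 8000)]))
```

A `location` block would then forward requests with `proxy_pass http://frappe_app;`, while static assets are served by NGINX directly.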

Architecture Diagram

Detailed Flow

Gunicorn Worker Sizing

- Formula: (2 × CPU cores) + 1 workers per server is a common starting point

- Worker class: geventwebsocket.gunicorn.workers.GeventWebSocketWorker for WebSocket support

- Timeout: 120s for long-running requests (reports, data imports)
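
The sizing rule above is easy to turn into a small helper. This sketch assumes the result feeds a Gunicorn settings file, where `workers` and `timeout` are standard setting names; the function names themselves are hypothetical, and the formula is only a starting point to tune under real load.

```python
import os


def gunicorn_workers(cpu_cores=None) -> int:
    """Apply the (2 x CPU cores) + 1 starting-point formula."""
    cores = cpu_cores if cpu_cores is not None else (os.cpu_count() or 1)
    return 2 * cores + 1


def gunicorn_settings(cpu_cores=None) -> dict:
    """Combine the worker count with the 120-second timeout noted above."""
    return {"workers": gunicorn_workers(cpu_cores), "timeout": 120}
```

On a 4-core server this yields 9 workers; memory per worker should be checked before accepting that number.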